AI systems that match or exceed human intelligence across a wide range of cognitive tasks could arrive soon, perhaps as soon as 2030. These wouldn’t be narrow tools that outperform humans at specific tasks like image recognition or playing chess; they’d be in principle capable of the full range of cognitive work humans do, from scientific research to highly complex reasoning to navigating social situations.

Importantly, human-level intelligence could be just the start. If AI systems advance to a point where they’re capable of improving themselves, this could trigger self-reinforcing feedback loops leading to systems far smarter than humans within a short timeframe.

We can only speculate about how far AI will advance, and how quickly. But even without knowing exactly what the future holds, we can be confident that advanced AI will have a dramatic impact on society for good or bad.

The closest historical parallel may be the Industrial Revolution. It brought unprecedented prosperity and technological progress, lifting millions out of poverty. But it also caused enormous disruption, upending entire industries, and massively accelerated the use of harmful fossil fuels. Its benefits were also distributed very unevenly, leading to long-standing changes to the balance of power and wealth within and between countries.

If we knew there was a good chance of another Industrial Revolution happening in the next 10 years, what steps would we take now to prepare? What questions would we want to answer in advance? What policies should we implement? What safeguards would we try to establish? That’s plausibly the situation we face with AI, except this time, once they really begin, the changes could come even faster.

What follows is a non-exhaustive overview of some of the new challenges that we will likely face in a world with advanced AI technology. By necessity, most of what we can say at this point (in mid-2026) is highly speculative, and there are huge uncertainties around when AI systems smarter than humans will come to exist. Nonetheless, the prospect is a highly plausible one, meaning it’s worth anticipating the challenges that may come with it.

This article addresses some of the most important considerations about this topic, though we may not have looked into every relevant aspect, and we likely still have some key uncertainties. It’s the result of our internal research, and we’re grateful to Bradford Saad and Fin Moorhouse for feedback and advice.

Note that the experts we consult don’t necessarily endorse all the views expressed in our content, and all mistakes are our own.

Resource spotlight

This article draws significantly from research by Forethought. If you want to dive deeper into these topics, we’d highly recommend consulting their work, especially this article.

Accelerated technological progress

AI’s potential for advancing the frontiers of science and technology is enormous. At the time of writing, for instance, the best AI models are able to fairly reliably perform complicated software engineering tasks that would take more than ten hours of human work, and even some that could take weeks. If and when AI reaches the point of rivalling humans in other core research capabilities, such as designing experiments and proposing research agendas, we could see a sharp acceleration in the rate of technological improvement. In fact, it’s been hypothesized that we could see the equivalent of the entire last century of technological progress in just one decade.

This is an exciting prospect in some ways, but it also brings enormous risk. We can think of technological development as akin to pulling balls from an urn. As we develop each new piece of technology, it’s like we’re reaching into the urn hoping to pull out balls that will help humanity. Many of these are straightforwardly beneficial, like vaccines and other medical technologies. But some balls can be highly dangerous, like nuclear technology. The trouble is, we have a limited ability to know whether technology will harm us or help us until we’ve developed it.

The scientific speedup that advanced AI may bring is like tipping this urn upside down, spilling thousands of balls onto the ground at once. If this happens, we’ll have little time to evaluate and prepare for each new technology before the next arrives, leaving us able to do little more than hope that none of them turns out to be destructive.

But what could these dangerous technologies be?

Well, we can’t know exactly, but we do have some good guesses about the risks based on current technologies and trends. For one, AI may make it much easier to create and deploy contagious and lethal pathogens, including for malicious actors. This includes the prospect of highly dangerous mirrored bacteria, a novel and likely catastrophic form of life. The result could be a greatly increased risk of catastrophic pandemics.

Biological risks aren’t confined to bad actors, either: history is littered with unintentional leaks from laboratories, and the dangers of these will likely scale alongside our ability to create more dangerous pathogens, unless we take action to make these leaks less likely.

Other technological advances could also dramatically change society in ways we may not be prepared for. For instance, we could see technologies that let us better explore outer space, and potentially even settle beyond Earth. This prospect raises crucial questions, like how we decide ownership over territories and valuable resources in space. This isn’t a direct risk in itself, but competition over land and resources has historically been a significant source of conflict, so getting the governance of space right matters.

On top of this, there are many unknown unknowns. Technological progress is famously hard to predict in normal times, but if the rate of technological progress accelerates greatly thanks to AI, it becomes even harder to anticipate. There are many potential technologies with dangerous or destructive potential that we haven’t even considered yet, but that may become real before we’ve been able to grapple with their implications.

Transformation of knowledge and information

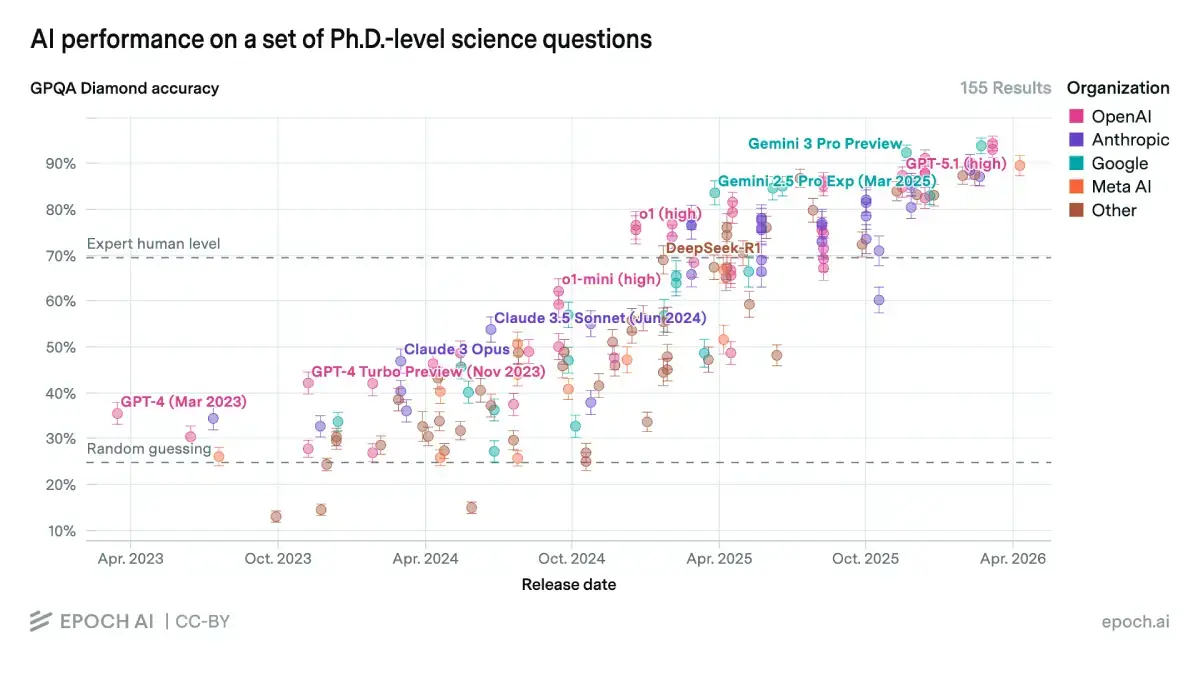

At the time of writing, the best AI models already have impressive capabilities. For instance, a test run by Epoch AI found that several models exceed the performance of experts in answering a set of PhD-level science questions. Though this doesn’t equate to a general level of scientific expertise, it looks likely that AI models will eventually possess an advanced understanding of the frontiers of knowledge in almost all domains.

And as AI’s capacity for understanding grows, so too will its ability to condense and explain information, as well as make accurate forecasts about the future. Alongside other epistemic advancements, it seems inevitable that we’ll increase our reliance on AI to provide us with information, bringing significant effects on how individuals and institutions form beliefs, reason through problems, and make decisions.

As with other forms of potential progress, there are clear potential upsides to this, such as the democratization of access to high-quality information and expertise, improving public understanding of complex issues. This could allow people to make better decisions both for themselves and collectively, such as through more informed voting decisions.

But there’s a risk that, as we become more dependent on AI systems for forming beliefs, we may become exposed to new vulnerabilities. One risk stems from the fact that AI systems may not have incentives aligned with providing accurate information to their users. We already see algorithms optimized for engagement spreading false information, and AI developers have similar financial reasons to create models which retain users at the expense of information quality. Again, this is not a foregone conclusion, but rather a risk worth bearing in mind.

Beyond AI’s potential unintended effects on our access to information, future AI systems may be misused by bad actors to intentionally spread convincing disinformation. For instance, highly convincing deepfakes may not just deceive people; they’ll also have the effect of making it harder to trust authentic videos and images. This is already occurring, and the problem is likely to worsen as AI-generated content becomes harder to distinguish from genuine material.

Beyond simple disinformation, future AI systems may be capable of manipulating and persuading people with enormous skill, generating highly convincing, personalized arguments for almost any claim. The potential harms here have precedent; for example, successful lobbying from tobacco companies has likely led to millions of deaths. The risk is greater with AI, however, because many groups, organizations, and individuals could gain access to highly convincing persuasion tactics.

Preserving power

Beyond AI’s influence on what we believe, the sheer potential capability of advanced AI systems raises distinct concerns about how that power could be used to entrench important outcomes, such as who governs and what values they govern by, over very long timespans.

The most direct worry is that advanced AI raises the prospect of a power lock-in. Superintelligent AI systems in particular could serve as powerful tools for surveillance, social control, and suppressing dissent. These capabilities may be sufficient for an authoritarian power to maintain and even increase its control to a much greater degree than we’ve seen in history.

On top of this, sufficiently advanced AI could allow even small groups and individuals to seize power through various means, even within established democracies. AI could dramatically lower the resources and personnel needed to execute a successful power grab, making it feasible for much smaller groups than was historically possible.

A closely related concern is that AI could let particular people or groups deliberately lock in their values, not just their power. A society’s values shape which practices it tolerates, promotes, and prohibits. Historically (and to this day), flawed moral views have led to the mistreatment of groups based on factors like ethnicity, gender, and sexuality. Advanced AI, particularly speculative superintelligent systems, could give those who control it the means to entrench a specific moral worldview against future revision, in a way no previous technology has allowed.

Even without malicious intent, AI could produce a similar effect. AI systems may internalize and preserve their programmed values, whether good or bad, long into the future. They’d do so far more reliably than humans, whose values often shift both within and across generations. As we come to depend on AI for more and more of our reasoning, judgment, and decision-making, the values built into those systems may prove resistant to change, whatever they are.

Though this value lock-in could help preserve many of the things we currently value, such as the condemnation of slavery and various types of discrimination, it could also present a barrier to further positive changes. This would not only prevent progress on already-identified areas for moral improvement, but may also prevent us from identifying additional areas for needed changes in our values.

If we contemplate just how much societal values have changed over the last century, we may have reason to worry. What would the world look like today if we had frozen the moral beliefs common in the early 1900s? Most would consider this a disaster. But our current moral values may look just as antiquated to those living in a century’s time. Value lock-in is far from inevitable, but AI’s potential to stall moral progress should nonetheless count as a concern.

AI sentience and welfare

So far, we’ve discussed the new risks posed by AI to society. But the development of advanced AI systems also raises profound questions about whether AI systems themselves deserve moral consideration in their own right. How plausible is it that future AI systems could have interests, experiences, or other properties that give us reasons to care about their welfare or give them rights? And if they do, how should we weigh those interests against those of humans? Should AIs be allowed to own property or be paid for their labor? Should they be free to disregard requests from humans?

These might sound like strange questions now, but they’re worth taking seriously. We don’t yet know what advanced AI systems will really look like, and humanity has a poor track record for working out what, and who, deserves moral consideration. As a result, many groups have been (and continue to be) unjustly harmed.

The question over the moral status of digital beings is particularly thorny, though. There’s little consensus among philosophers over even the most basic questions in ethics. More specific to this context, there’s significant disagreement about whether AI systems could have conscious experiences (including feelings like pleasure or pain), and about whether consciousness is even necessary for deserving moral consideration.

This question is additionally important because digital minds could be unique in ways that make them deserving of special moral attention. Specifically, digital minds will plausibly be cheap to reproduce and could vastly outstrip human brains in their ability to process and compute. This raises the prospect that there could be a vast number of AI “beings” deserving of moral attention, each of which could have the capacity to experience emotions (such as suffering) that exceed those of humans in their intensity, perhaps elevating their moral importance.

What makes these questions especially difficult is the risk that we miss the chance to answer them through careful deliberation. Economic incentives may push toward treating AI systems primarily as tools, even if evidence suggests they ought to be afforded moral consideration. At the same time, growing public attachment to AI systems could generate electoral pressure for pro-AI policy that outruns careful moral reasoning. Either way, there’s a real risk we fall into these default answers instead of those we’d arrive at through careful reflection.

Resource spotlight

This expert forecast survey conducted in 2025 provides an excellent overview of current views on AI sentience, including when we might expect digital minds to exist, and what the risks and welfare implications could be once they do.

What can you do?

If you want to use your career to help tackle some of the important questions discussed here, what are your best options?

One obvious and crucial need in this area is research. There’s a huge gap between what we want to know about these problems and what we do know. This is inevitable in large part; there are many things we might not know until advanced AI arrives. But because there is currently very little work being done to map out many of the issues raised in this article, there is likely to be low-hanging fruit, meaning a capable researcher has a decent chance of making meaningful progress on important questions.

This may be a particularly pivotal time to work in this area, too. As mentioned above, society could default to suboptimal answers on all the important questions advanced AI systems raise, and there might only be a limited window to tackle them seriously.

Organizations doing research in this space include Forethought, Center on Long-Term Risk, Windfall Trust, the Center for Reducing Suffering, Eleos AI, Cambridge Digital Minds, the Sentience Institute, and Rethink Priorities.

This list is not exhaustive. However, the overall number of organizations focused tightly on the challenges raised in this article is fairly small.

But because these AI-related issues intersect with more established fields, it’s possible to work on these questions within think tanks and research organizations that work on these topics more broadly. For instance, there are many think tanks focused on issues like preserving democracy, improving international relations, and regulating technology. Though these organizations’ research agendas may not tightly map onto the issues raised here, there is likely lots of room for helpful work.

Helpful work in this area isn’t just limited to performing research yourself, though. Research organizations need all sorts of staff, like operations and communications specialists, to help them work more effectively and allow their research to reach the right people. Because there are so few organizations working in this space, founding new ones could allow for more researchers to get involved with these questions, and grantmakers can help expand the space by funding promising research efforts.

If you’re interested in research, you may also want to consider academia, particularly within philosophy, political science, economics, and other relevant disciplines. If you have sufficient autonomy over your research direction, you might be able to steer your work towards important questions, reducing the need to work in a specialized organization. In terms of which research topics might be most worth pursuing, Forethought’s various project ideas are a good place to start, and are worth consulting even if you just want to get a sense of what might be helpful.

On top of research, there’s also a need for policymaking and advocacy work: raising awareness and implementing policies we can already be confident in. Research organizations and think tanks often do outreach of their own, both to the public and policymakers, but dedicated advocates may be able to make further headway. Policy is an exceptionally broad path, with potentially promising roles both within and outside of government. If you’re interested in this route, we’d recommend reading our broader advice on careers in policy, which covers both advocating for and implementing policy.

Finally, you might consider how you can use AI for good. Most of the problems identified here have beneficial “flip sides”. For example, AI could harm the quality of available information, but it could also dramatically improve it. Helping to advance these positive use cases, for instance by building tools and programs, could be another way to contribute.

Resource spotlight

Emerging Tech Policy Careers is a great resource for anyone interested in exploring policy work across the issues discussed in this article, especially for those in the US.

Recommended resources for taking action

Internships and fellowships

Here are a few great recurring opportunities for those who are interested in addressing the problems raised in this article with their careers, and are early-career or still studying:

- The Cambridge Digital Minds Fellowship is a week-long residential program designed to build capacity in the digital minds space, combining technical and philosophical foundations, societal and policy thinking, and structured support for project development.

- Forethought, one of the leading organizations in this field, has stated an intention to run fellowships. It’s worth checking their careers page, where these opportunities may be listed.

- The Tarbell Fellowship is a year-long program for early-career and senior journalists interested in covering key questions in artificial intelligence. Fellows receive a stipend and a nine-month placement at a major newsroom, participate in a 10-week course covering AI and journalism fundamentals, and attend a weeklong journalism summit.

- The Center on Long-Term Risk’s Summer Research Fellowship is an opportunity to work on research questions relevant to reducing suffering in the long-term future, with a focus on risks posed by AI.

- The Future Impact Group Fellowship is a part-time research fellowship pairing fellows with project leads across three priority areas: AI policy, philosophy for safe AI, and AI sentience.

Communities

These are communities you might find helpful for networking and upskilling within this cause area:

- The AI Alignment Forum is a discussion board for many matters related to AI. Though primarily focused on alignment and safety, questions raised in this article are also discussed there. It’s open to anyone, and has frequent activity from professionals in the field.

- The communities page run by AISafety.com has a large range of listings. Though most are focused on AI safety, some will likely have overlap with the issues covered here.

Courses

These are courses that may be particularly good ways to upskill within this cause area:

- Introduction to Digital Minds by Cambridge Digital Minds is an 8-week online course exploring the perceptions and plausibility of digital minds—and what this may mean for ethics and society.

- BlueDot Impact runs multiple free courses related to issues surrounding advanced AI. You’ll complete the courses alongside a cohort of others, but they’re run very regularly. Their Intro to Transformative AI may be a good place to start, particularly the “promise of AI” section.

You can also explore

- Our articles on AI Safety & Governance and Broad Societal Improvements.

- Forethought’s many research reports on the issues discussed in this article. Additionally, the Forethought Podcast covers a range of topics related to their research.

- Digital Minds: A Quickstart Guide provides an accessible overview of the topic.

- Epoch AI’s AI benchmarking reports.

- Nick Bostrom & Matthew van der Merwe – How Vulnerable is the World?

- 80,000 Hours’ podcast interviews with Robert Long, Will MacAskill, and Rose Hadshar.

- 80,000 Hours’ article on the moral status of digital minds.